Articles on Science

- Major discoveries – Timeline

- Laws of Physics

- Articles on Science

- Ethane in the Sky

- Rotation of the Earth

- Life on Mars

- See me, touch me

- Going Mobile

- Making paper

- Two Hundred Years Ago

- Long distance travel

- Sounds good to me!

- Sounding good

- Atoms and the bomb

- Nuclear binding

- Nuclear fission and fusion

- The chain reaction

- Producing power from fission

- Destination Mars

- D D T (dichloro-diphenyl-trichloroethane)

- Artificial Gravity

- Illusions

- E = mc2

Ethane in the Sky

On March nights, we were treated to the glorious spectacle of Hyakutake spreading across the sky. Astronomers from all over the Earth turned to look at the comet. They were well rewarded. The Hubble Space Telescope got the first-ever look from the Earth at the icy nucleus inside a comet. (It is just around 2 kilometers in size.) For the first time, a comet was seen giving off X-rays. They were faint, but because Hyakutake passed only about 150 lakh kilometers from the Earth, they were caught by an X-ray telescope. Other astronomers have been busy looking at the spectrum of the comet. This usually gives a lot of information about the compounds that are in the comet (Jantar Mantar, Sep-Oct ’95). Michael Mumma and Michael DiSanti found methane in the comet, forming almost 1% of the comet’s ice. Until now, methane has only been found in the planets (such as Saturn) and their moons (like Titan). Happy at their success, Mumma and DiSanti then looked even more carefully at the spectral lines of the comet. They found the lines of ethane, a compound which has not been found in space before. Ethane is another 1% of the comet’s ice. They are looking at the lines again. Who knows, they might even find something like naphthalene!

Rotation of the Earth

How fast does the Earth rotate? Once in 24 hours, obviously, for that is how we define our day. That is how fast the surface of the Earth, where we live, rotates. What about other parts of the Earth? That seems a silly question. If, say, from 100 kilometers below the surface, the inside of the Earth rotated at a different speed, the friction between that and the outside would split the crust apart. So the whole Earth has to rotate at the same speed. But that question is not silly after all. Have you experimented with a rotating egg? Below the crust of the Earth lies the mantle, and below that lies the core. The core of the Earth is believed to be iron. It is liquid up to some depth, but the innermost core is solid iron. It must be something worth seeing, a huge iron crystal 2400 kilometers in diameter, as massive as the Moon. The liquid layer gives the inner core the freedom it needs.

The solid core, separated from the outer hulk of the Earth by the liquid in between, can rotate at a different speed. This was pointed out by two American scientists, Gary Glatzmaier and Paul Roberts. They did some calculations and suggested that the inner core should be rotating slightly faster than once in 24 hours. Geologist Xiaodong Song and seismologist Paul Richards have found that Glatzmaier and Roberts were absolutely right. The inner core goes around in about 1 second less than a day. To put it more dramatically, the inner core that was below the western tip of Africa in the year 1900 is under our feet today. How did they find this out? The seismic waves of earthquakes (Jantar Mantar, Nov-Dec ’93) travel right through the Earth. When a tremor takes place in Antarctica and is recorded in Alaska, the seismic wave has traveled through the Earth’s core. Because this wave is going eastwards, it should be carried slightly faster through the core. Another wave, going westwards, for instance when an earthquake in New Zealand is recorded in Norway, will be slower. Checking such earthquake data, Song and Richards found that the westward waves were indeed a fraction of a second slower than the eastward ones, and from that they calculated the speed of the core’s rotation. Several questions arise. As is known to physicists, such a difference in speed gives rise to a current (in this case, of billions of amperes) flowing between the solid inner core and the liquid outer core. Such a current must be causing a magnetic field as well. Is this linked to the magnetic field of the Earth?

Life on Mars

Four and a half billion years ago, a rock was formed on Mars by some volcanic process. Half a billion years later, this rock was broken into smaller pieces by a meteorite impact nearby. Some ground water also entered the rock. 16 million years ago, an asteroid hit Mars somewhere near where this rock was. The impact threw pieces of the rock into space. One 2 kilogram piece of rock orbited the Sun until 13,000 years ago, when it came close to the Earth. This piece crashed onto an Antarctic glacier. Over 13,000 years, it reached the Allan Hills region of Antarctica, buried inside the ice. In 1984, this meteorite was discovered and named ALH84001. A large number of people worked out this history of the meteorite that we just narrated.

This year, a team led by David McKay of the American space organization NASA suggested that there seemed to be signs that life may have existed on this rock in some bygone era. The meteorite has some organic molecules, of the same family as naphthalene (which is used in mothballs). When bacteria decay, such compounds are produced. However, many meteorites do have such compounds. The meteorite has iron oxide (magnetite) of the sort which some bacteria on Earth secrete. It has iron sulphide, which is produced by some anaerobic bacteria (those that don’t use oxygen). The meteorite has some balls of carbonate material, which may be formed by some living thing. On the other hand, almost all Earth bacteria are 100 times larger than this material. The meteorite may contain very small fossils (less than a hundred millionths of a millimeter, that is, 100 nanometers). Nanobacteria are this size.

In 1961, another meteorite was found to have signs of life. But soon these were discovered to be grains of pollen and particles of furnace ash. The signs of life turned out to be from Earth itself. This could be the case for the Antarctic meteorite too. What makes scientists more hopeful is that some of the items mentioned are within cracks, and the cracks could only have been formed before the meteorite came to rest in Antarctica. So maybe, just maybe, the signs of bacterial life that we see are from when the rock was on Mars. In 1976, the Viking spacecraft failed to find any such bacteria on Mars. But maybe they landed in a lifeless part of Mars. Or maybe bacteria were present on Mars millions of years ago, but aren’t there now. Scientists are looking at ALH84001 very, very carefully. And even US President Bill Clinton has promised support for a new NASA spacecraft to Mars.

See me, touch me

There is a popular belief that loss of one sense strengthens others. Scientists have now found some evidence for this. Normally, sight and touch are handled by different areas of the brain: the first in the visual cortex and the second in the sensorimotor cortex. A team of scientists led by Norihiro Sadato in Japan found that in blind people, the visual cortex may be taken over to perform other tasks. When blind people used their fingers to scan text written in Braille, scientists measured an increase in blood flow (and hence brain activity) in the visual cortex. When they scanned meaningless dots on a page, brain activity was restricted to the sensorimotor cortex. On the other hand, for sighted but blindfolded people (trained in Braille), whether the page contained Braille or meaningless bumps, the act of scanning the page with the finger only activated the sensorimotor cortex. This suggests that how pathways in the brain get “wired” during learning is much more complex than previously imagined.

Going Mobile

Telecommunication signals are sent using different frequencies. Usually a “base” or carrier frequency is chosen, and the message itself “shifts” or modulates the carrier frequency. If many frequencies (a large bandwidth) are available for transmission, several messages can be multiplexed and sent in parallel. Earlier, we took a look at the older telecom systems: the telegraph and telephones. In the May-Jun ’96 issue, we examined the working of fancier things like fax and small PABXes. This issue looks at the recently introduced technology of mobile telecom equipment. Pagers and cellular phones are now available in most major cities. These mobile systems attempt to solve one big disadvantage of the telephone system. Your telephone instrument is fixed to a particular location: you cannot move it around with you while you are traveling.

The instrument is connected to the exchange through wires. It is this connection that sets your telephone number. The number is used to switch incoming calls to you and to bill you for outgoing calls. What if you want to call someone who is not near a telephone? In a large hospital or factory, it is sufficient for a person to carry a beeper. To contact him, the operator at the switchboard dials the number of the beeper. The beeper then makes a sound or vibrates to alert the person that he is needed. He goes to the nearest internal telephone and calls up the switchboard to get the message. This is called paging. Such systems have been in use for a long time. Paging systems use frequencies in the Very High Frequency (VHF) range, around 100 Megahertz (MHz or million cycles per second). Since they are very low power, there is no interference with systems installed elsewhere and the same frequencies can be reused. Also, since only a few tones (beeps) are sent, the bandwidth needed by these systems is very small (only around 1 kilohertz). However, these are local systems which cannot be accessed by people outside the particular building complex: a call can be made only from the switchboard.
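To get a feel for how several messages can share a band of frequencies, here is a rough sketch in Python. It assumes a hypothetical 100 kHz slice of spectrum starting at 100 MHz (these band figures are made up for illustration and do not describe any real paging system) and gives each channel the roughly 1 kilohertz of bandwidth mentioned above. Real systems would also leave guard gaps between channels, which this sketch ignores.

```python
# Illustrative frequency-division multiplexing plan.
# The band start and band width below are assumptions, not real allocations.

def channel_plan(band_start_hz, band_width_hz, channel_width_hz):
    """Return the carrier (centre) frequency of every channel that fits in the band."""
    count = band_width_hz // channel_width_hz
    return [band_start_hz + channel_width_hz * i + channel_width_hz // 2
            for i in range(count)]

# Hypothetical band: 100 kHz wide, starting at 100 MHz, with 1 kHz channels.
carriers = channel_plan(100_000_000, 100_000, 1_000)

print(len(carriers), "channels fit in the band")
print("first carrier:", carriers[0], "Hz")
print("last carrier:", carriers[-1], "Hz")
```

Each entry in `carriers` is one carrier frequency that a separate message could modulate, so under these assumptions a hundred beeper signals could be sent in parallel.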

Making paper

- Mechanical Pulper

When making paper from wood, the logs are trimmed and their bark removed. The wood is then ground by rotating grindstones in the presence of water to form wood pulp. Fiber quantity can be controlled by varying the water supply. For example, if the water supply is reduced so that more heat is generated, longer fibers are obtained. Mechanical pulping is typically used to produce pulp for newsprint.

- Chemical Pulper

In a chemical pulper, logs of wood are sliced and chipped. Chips of the appropriate size are pressure cooked in the presence of either an acid or a base. The size of the chips, the strength of the acid or base and the pressure applied determine the fineness of the pulp.

- Pulp preparation

The pulp is washed to remove impurities by rotating it rapidly in a cleaner so that rubbish collects at the bottom. Pure pulp comes out through an outlet at the top. Bleaching agents are added to make the paper extra white. Now the pulp can be poured out into flat sieves and dried. This crude paper can be shipped to different mills.

- At the paper mill

Pulp arrives at a paper mill in the form of sheets. These are disintegrated in water. Loading materials, usually china clay or chalk, are added to the paper to make it less transparent. Sizing agents, like resin, make the paper resistant to soaking, so that one can write on it with water-based ink. Dyes and pigments are added to lend colors. The mixer thoroughly mixes everything. A beating machine now takes over. The beating affects the fibers of the pulp. This stage determines the sort of paper that will be produced. The end product is called stock. The stock comes onto a continuous belt made of wire. Sideways shaking of the belt improves the way the fibers bond. Water is drained out from below the wire mesh. A huge roll rides on the upper surface of this web. By putting a design on this roll, a watermark can be impressed on the web. The web is further squeezed of its water as it goes through a pair of rollers. It then passes over steam-heated iron cylinders where most of the water is removed. If the cylinders are polished, the end product gets a glazed finish. Finally the paper is wound onto reels.

- Pulp facts

From the time we get up in the morning and reach out for the newspaper at our doorstep to the time in the night when we put aside a book or a magazine and go to sleep, we encounter paper in various forms: school books, bus tickets, paper money, office files, letters, everything crucially dependent upon the existence of paper. It is so much a part of our life that a world devoid of paper is unimaginable. The invention of paper is credited to Tsai Lun, a Chinese courtier, who made the first paper about 2000 years ago from old fishing nets and rags. The Chinese made paper by boiling rags and plants, constantly stirring the mixture to make a thick pulp.

A sieve was then dipped into the pulp and removed horizontally with a layer of pulp on it. Excess water drained away through the mesh of the sieve. The layer of pulp was then pressed and dried to obtain paper. In principle, the same process is used today. The word paper comes from the Egyptian papyrus, a water reed. It was used as writing material more than 5000 years ago by the Egyptians. Papyrus is different from paper: it is made of layers of reed placed across each other (like a mat), pressed and then dried, whereas in paper the fibres of the rags or plants are completely realigned (like a mattress). The bonds that form between the cellulose fibres are chemical: hydrogen bonds. For its weight, paper is stronger than steel. The Sumerian civilization (in present-day Iraq), before the Egyptians, used clay tablets. In Tamil Nadu, palm leaf manuscripts were in use. Parchment (made from animal skins) was used in Europe until the 14th century. Of the copies of the Gutenberg Bible, the first printed book, 180 were printed on paper and 30 on parchment. None of these are bonded chemically like paper is. Writing was quite difficult on all the other materials. A hard stylus had to be used to carve out the letters. The pulpiness of paper means that something quite soft, like a pencil, can be used to write on it. Paper-making spread to the west when Chinese paper makers were captured by the Arabs in the 8th century. It reached Europe in the twelfth century when Jean Montgolfier escaped from the Saracens (in Syria) and returned to France to set up a paper mill.

He had learned this art while working as a slave in a paper mill. While the basic process, making pulp and drying that pulp to make paper, remains the same, paper-making has seen technological advances. So much so that these new advances were exported back to China from the west: the technology has truly made a complete circuit of the globe! Paper-making, which started as a small scale industry confined to homes, is now almost completely mechanized. The most common type of paper-making machine is the Fourdrinier, named after two brothers who built the machine in 1803. Paper became so popular for writing that there was soon a shortage of rags! Newspapers had already started way back around the year 1600, but now they became widespread.

A more copious source for pulp was looked for. The French naturalist de Réaumur observed that wasps chew wood and make it into a pulp with their saliva. The pulp is spread out in the shape of a comb and when it dries, a reasonably tough, paper-like nest is formed.

Nowadays most paper is made from wood pulp. Your school notebook, newspapers, magazines, posters, envelopes: all are made from wood pulp. Jantar Mantar is printed on glazed newsprint, which is also made from wood pulp. The glazing comes from polishing the paper when it is made into rolls. Wood pulp fibre is longer than that of rags. Rags and plant material continue to be used for making bond paper, currency notes, drawing paper and blotting paper. Before the 19th century, paper was used only for writing.

Do you know when envelopes were first made? In 1841. Paper bags came in 1850. Large, machine-made paper boxes appeared in 1894. (Cartons are a 20th century invention.) Have you wondered whether bread has always been wrapped in paper? The practice dates to 1910. What has made paper-making a huge industry is the realization that by treating the paper differently during the process, very different qualities of paper can be produced. To wrap, say, Amul butter, you require paper which is dense, smooth and greaseproof. This is done by adding wax while making paper. If paraffin is added, the paper becomes a bit stiffer, which is useful for paper cups.

By using a resin instead, paper suitable for wrapping bread is produced. A different resin produces paper for lining bottle caps! During paper-making, a coating can also be introduced onto the paper. By coating one side with abrasive, you get sandpaper. Coating one side with gum is useful for postage stamps, stickers, and so on. By appropriate treatment, the paper can be made resistant to tearing, water vapour, gases, oil, grease, insects, rats... Is it any wonder that paper is the man-made product which is made in the largest quantities?

K. V. Subrahamanyam Max Planck Institute, Saarbrucken

Two Hundred Years Ago

The Europeans built forts, and their armies were used by local kings to fight their neighbours. The foreigners, especially the English, became more and more powerful. By two hundred years ago, the British began to feel that their trade was paramount, and that to protect their trade it would be necessary for them to rule India. Why was the Indian trade so important to the English that they decided to rule over a country thousands of kilometers away?

- The industrial revolution

First the British got rich by selling Indian spices and Indian-made clothes in England. This was the time when the Industrial Revolution began in Britain. Textile factories were set up in England. For these textile factories, cotton was bought from Indian or American farmers and sold in England. Clothes made in Manchester were sold in Calcutta, Bombay and Madras. Other raw materials, such as indigo, opium, sugarcane, tea and coffee, were grown in India and taken away to Britain.

To take away these raw materials, the British began to build railway lines and roads across India. For these, they needed iron, coal, wood and so on. To mine these minerals and take away wood from the forests, they had to keep officers in different parts of the country. To have control over what was grown here, it was not very convenient to have to go through an Indian prince, however accommodating he might be to the British. The East India Company began to take an interest in ruling India.

- Dissatisfaction

Many Indian rulers realized what was happening and fought the British. Hyder Ali and Tipu Sultan of Mysore, Mahadji Scindia of Gwalior and Nana Phadnavis of Pune were among them. But they were all separate states and finally lost to the Englishmen.

So the English occupied much of India. Where other kings ruled, an English Resident was placed to watch over their activity. The Britishers had to fight a lot to retain their rule, and still things were not peaceful for them. The Indian kings and princes revolted because the British would decide whom to make a king and when to remove him. The farmers and landowners also revolted because the tax (in the form of crops) taken from them was heavy and very strictly enforced. If the tax was not paid, the land would be auctioned and sold.

The tribals living in the forests of India revolted because the British entered what had until then been their territory. Their forests were being taken away from them for laying railway sleepers. Both Hindus and Muslims were worried that the English would destroy their religion and make everybody into Christians.

- 1857

The biggest opposition to the English was in 1857, when a large portion of north India rose against the foreigners. This revolt was started by Indian troops in the English army, but soon kings, landowners, farmers, tribals, workers all took part in this rebellion.

On the evening of 10 May 1857, the sepoys in the cantonment of Meerut rose in revolt. Sepoys who had fought for the British and defeated the Indian kings were now annoyed with the Englishmen. They were not treated well in the British army. Even their wages were often delayed. On top of that, there were rumours that the new bullets issued to them used cow and pig fat.

That very night, the rebel troops marched for Delhi. By morning, they had crossed the Yamuna and reached Delhi. The Mughal emperor Bahadurshah Zafar was imprisoned in the Red Fort by the British. The sepoys declared him their emperor and convinced him to revoke British rule. “We will again establish the Mughal empire!” That was their cry.

As the news spread, there were riots in Meerut. Huge crowds attacked Englishmen’s houses and offices. Many Britishers were killed. In other places too, in the cantonments of Aligarh, Mainpuri, Bulandshahr, Attock and Mathura, the sepoys rebelled.

In spite of this huge success of the rebels, the English slowly brought the situation back under their control. The main reason why this uprising lost to the British was that each region fought the English separately. There was no combined strategy. So the English could deal with the rebels of each region separately. Another reason was the lack of modern weapons in the Indian rulers’ armies. Modern guns and cannon, and ammunition for them, came from abroad. How long could the rebels, with their old guns, their bows and arrows, spears and swords, stand against the British? It took more than a year, but finally the British crushed the revolt.

- The Indian empire

The 1857 rebellion was the biggest threat to their strength that the British faced. After defeating it, they changed their policies. In 1858, many Indian zamindars were given their own jagirs. They were promised that their property would be protected. Pandits and maulvis were assured that the British government would not interfere with India’s religions. Indians would be taken into the administration of the country.

In 1857 the British had seen their empire slipping out of their hands. Now they tried to make sure that by pleasing the important people in India, by coming to an understanding with them, they could continue to rule. Their grip on India was tightened so that they could stay on for the next 90 years.

Long distance travel

Imagine that you live on a planet without an atmosphere. (Perhaps we have colonized the Moon.) You will be using spacesuits when you step out of your airtight buildings. In such a world, there would be no air friction. How could you use this?

Let us say you want to send a package to a friend on the other side of the planet. You would hold it at a certain height, and if you gave it the right speed horizontally you would put it into a circular orbit around the planet at that height. Your friend would then catch it as it came around to where she was living. (You would need to tell her it was coming, for she wouldn’t always be waiting for packages from you.)

The only energy you would spend would be what it cost you to accelerate the package to the right speed. Even this energy could be regained at your friend’s end with an appropriate stopping mechanism. Along the orbit no fuel is needed: the package cruises along like any satellite that orbits the Earth. It is in free fall.

Of course, the package could be your vehicle. This suggests a system of space ways instead of roads. Buildings on the planet would be kept off the space ways along which vehicles in planetary orbits would be whizzing along. Transport would cost next to nothing. The orbits could be as low as you want. In principle, they could be so low that they are almost touching the ground. But of course, to prevent encountering hillocks on the way, they could be a bit higher.

This fizzix fact (fantasy?) tells you something. On Earth, most of the fuel we spend in operating our vehicles goes into combating air friction (assuming the roads are good!). Whenever we decelerate or stop, the kinetic energy of the vehicle is simply dissipated into friction.

Let’s look at the physics in some detail. An object moves in a straight line with a constant velocity if no force acts on it. A force will change its velocity. Even to just change the direction in which an object is moving, without changing its speed, a force must be applied. The gravitational pull of a planet on a satellite is the force which continuously keeps changing its direction of motion, bending its orbit into a circle.

- On a small planet

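An object of mass $m$ moving with a speed $v$ in a circular orbit of radius $r$ is undergoing an acceleration $v^2/r$ towards the centre of the circle. The gravitational pull of the planet is $GMm/r^2$, where $M$ is the mass of the planet and $G$ is a constant. This pull is the force that provides the acceleration, so $GMm/r^2 = mv^2/r$, which gives the orbital speed $v = \sqrt{GM/r}$.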

For a low, ground-hugging orbit on an Earth-like planet, this speed is roughly 7.9 km/second, a prohibitively large speed. It is so large that an accelerating ramp for vehicles that are accelerated at one g would be 3000 km long! When an object is dropped near the surface of the Earth, it falls with an acceleration of $9.8\ \mathrm{m/s^2}$. This acceleration is referred to as one g. Twice this acceleration would be two g’s.

Inside a vehicle accelerating at two g’s, a person would be pressed against her seat with a force that would be twice her weight. In a vehicle accelerating at 5 g’s, a person would be squashed against her seat by a force that is 5 times her weight. A human being accelerated by a few g’s will find it very uncomfortable and will not be able to stand it for long. Very high g’s will be lethal. So this method of transport may be impractical.

On a Moon-like planet though, v is smaller, about 1.7 km/sec. Even this will be too large for human passengers, but might be okay for packages which can withstand large g’s. On smaller bodies like asteroids, we would certainly use this mode as the required velocity will be small enough to reach.
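The numbers quoted above can be checked with a short calculation. The sketch below uses the relation $v = \sqrt{GM/r}$ for a circular orbit at the surface, together with $d = v^2/(2a)$ for the distance needed to reach speed $v$ from rest at a constant acceleration $a$. The masses and radii are approximate textbook values supplied here as assumptions, not figures from the article, and the function names are only illustrative.

```python
import math

G = 6.674e-11   # gravitational constant, N m^2 / kg^2
ONE_G = 9.8     # acceleration of one g, m/s^2

# Approximate mass (kg) and radius (m); assumed textbook values.
BODIES = {
    "Earth": (5.972e24, 6.371e6),
    "Moon": (7.342e22, 1.737e6),
}

def orbital_speed(mass, radius):
    """Speed of a circular, ground-hugging orbit: v = sqrt(G M / r)."""
    return math.sqrt(G * mass / radius)

def ramp_length(speed, accel=ONE_G):
    """Distance needed to reach `speed` from rest at constant acceleration."""
    return speed ** 2 / (2 * accel)

def half_orbit_time(speed, radius):
    """Time to coast half way around the body at the given orbital speed."""
    return math.pi * radius / speed

for name, (mass, radius) in BODIES.items():
    v = orbital_speed(mass, radius)
    print(f"{name}: v = {v / 1000:.1f} km/s, "
          f"one-g ramp = {ramp_length(v) / 1000:.0f} km, "
          f"half orbit = {half_orbit_time(v, radius) / 60:.0f} min")
```

With these inputs the speeds come out at about 7.9 km/s for the Earth and 1.7 km/s for the Moon, and the one-g ramp on Earth is a little over 3000 km, in line with the figures in the text. The half-orbit time is an extra: it is how long the package would take to reach a friend on the opposite side of the planet.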

- K.S Balaji

The other school

Sounds good to me!

In a bus, in a theatre, during your school assembly, and almost everywhere else, people can be seen singing songs or listening to songs. Indeed, singing and listening to songs is a favorite pastime for many of us. So how do we hear these songs? How do the songs come out of the loudspeakers (or speakers, as we sometimes call them)? How do the speakers work? What is a “good quality” speaker? In each of our ears, we have an ear drum. When the ear drum vibrates, our brain interprets it as sound. Why does the ear drum vibrate? Well, when the air pressure close to the ear drum increases, it pushes the ear drum inwards, and when the air pressure close to the ear drum decreases, it pulls the ear drum outward. Sound traveling through the air creates these waves of compressed air (higher pressure) and rarefied air (lower pressure). So when these waves reach our ear, our ear drum also moves in and out at the same rate, or frequency, as these waves, and our brain recognizes the sound.

Speakers use the same principle to generate sound, except that the process is in reverse. A membrane or diaphragm in the speaker vibrates in and out and pushes and pulls the air near it. The pulsating movement of the air then travels outward as sound. Indeed, it is the same principle that we use when we talk. Our vocal cords vibrate and generate sound. The term “speaker” refers to something a bit more than what we usually associate it with. The typical cone that we see inside a radio or tape recorder is actually termed a “driver”. One or more such drivers and the enclosure or box they are mounted in together constitute a “speaker”.

Why do we need more than one driver? The answer is simple: sound has a range of frequencies, and a single driver may not do a good job of reproducing all of the frequencies. So while a single driver can be used, usually two or three drivers are present in each speaker, each of which is optimized for a smaller range of frequencies. For example, high frequency noises, usually from stringed instruments, are better reproduced by small sized drivers. These small sized drivers are called “tweeters”. Their small size allows them to more easily sustain high frequency vibrations. Larger sized drivers may be more sluggish and less effective in sustaining high frequency vibrations. Mid-range frequencies, such as human voices, are better reproduced by medium sized drivers, which are simply called “mid range” drivers.

Finally, low frequency noises, which also correspond to large wavelengths, such as those from deep sounding drums, are best reproduced using large drivers, called “woofers”.
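The link between low frequency and large wavelength follows from the relation wavelength = speed of sound / frequency. The short sketch below works this out for three illustrative frequencies. The speed of sound (343 m/s, for air at about 20 °C) and the sample frequencies are assumptions chosen for illustration; real drivers cover overlapping ranges that depend on their design.

```python
SPEED_OF_SOUND = 343.0  # m/s in air at about 20 degrees Celsius (assumed)

def wavelength(frequency_hz):
    """Wavelength in metres of a sound wave of the given frequency in air."""
    return SPEED_OF_SOUND / frequency_hz

# Illustrative frequencies only, one for each kind of driver.
examples = {
    "woofer, 50 Hz": 50.0,
    "mid range, 1000 Hz": 1000.0,
    "tweeter, 10000 Hz": 10000.0,
}

for label, frequency in examples.items():
    print(f"{label}: wavelength = {wavelength(frequency) * 100:.1f} cm")
```

Under these assumptions, a deep 50 Hz drum note has a wavelength of several metres, while a 10,000 Hz note has a wavelength of only a few centimetres.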

The box or enclosure the drivers are placed in also plays an important role in making sure that the drivers are held securely in place. Well designed enclosures prevent unnecessary vibrations interfering with the sound from the speakers.

What makes the drivers vibrate? Electricity that is encoded to carry the sound signal arrives at the drivers through wires. At the drivers, the electricity encoded with sound keeps changing the polarity of an electromagnet present at the center of the driver. These changes in polarity occur in a manner consistent with the sound encoded in the electricity. The electromagnet at the center of the driver is surrounded by a permanent magnet. Due to the interaction between the changing polarity of the electromagnet and the permanent magnet, the electromagnet moves back and forth. Attached to the electromagnet is the membrane that makes up the cone of the speaker, and hence the cone vibrates as well and sound is produced.

So what is a good quality speaker? One important aspect of the quality of a speaker is its ability to accurately reproduce sound. In order to find out if a speaker is of good quality, it is important to have a good source of sound signals. So let us assume that we have a well recorded CD; in other words, we are assuming that the CD has an accurate copy of the original music.

So if this CD is played on a CD player, the speaker that makes the music sound as close to the original recording as possible is the best speaker. In other words, clarity of sound reproduction over the range of audible frequencies is the primary measure of the quality of the speaker. You can find this out easily. If you have a CD that has a song which has several instruments (stringed instruments, drums, etc.) and some human voices as well, in better quality speakers you will be able to hear each of the instruments clearly and also hear the voices clearly. In poorer quality speakers, some of the instruments will get hidden in the sounds of the other instruments, the sounds of the drums will tend to reverberate, and overall the various sounds will feel mixed up and unclear.

You will still hear the song but it will be a poor quality sound reproduction. Generally speaking, most speakers do a better job of reproducing mid range and higher frequencies. Even “cheap” single driver speakers will usually do a reasonable job of reproducing human voices and stringed instruments. However, very few speakers do a good job of reproducing deep sounding drums. Typical cheap speakers will reverberate while trying to reproduce drums. Sometimes you can hear this clearly as cars drive by with music being played at a high volume: you might notice the speakers jarring when the drums occur. Good speakers will produce a good, clean, single beat each time the drum is hit. Poorer speakers will continue to vibrate and produce additional sounds which are not there in the recording even after the drum beat. So far we have looked at the clarity of sound reproduction.

Over and above the clarity of sound reproduction, different speakers sound different based on the material used for their construction etc. For example, some speakers may have a tendency to sound slightly shrill, while others may have a more polished sound. In some ways judging speakers can be quite subjective. What seems pleasing to my ears may not seem as pleasant to yours. While it is easy to agree on the clarity of different speakers, variation in tone (shrillness etc.) can be difficult to grade. The tone of some speakers may be better suited for certain types of music as well. So your taste in music and your listening preferences make you the ultimate judge of the speaker that sounds best to you! How speakers work is one aspect of the audio world. But how about “THX”, “surround sound”, “Dolby pro-logic” etc.? What are all those? Some other time!

Dr. Prathap Haridoss,

Indian Institute of Technology Madras,

Chennai

Sounding good

- Sufal Swaraj

Bundesanstalt für Materialforschung und -prüfung, Berlin, Germany

All of us listen to some type of music or other. We also hear the news and other programs on the radio. Most of us take sound for granted; not once do we wonder how sound is produced and where it comes from. Why do your two hands make a sound when you hit them together hard? Or why does the neighbor’s window glass make a crashing sound when your cricket ball breaks it?

Let us try to understand sound. Sound is any disturbance that can travel through a medium like air or water and then be heard by the human ear. But what are these disturbances and why do they travel through a medium? When a body vibrates, the vibration causes a periodic disturbance in the surrounding air or any other medium. These disturbances are pressure waves. This means the vibration leads to periodic pressure changes in the medium.

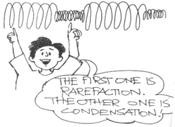

Suppose you have a guitar with you and you pluck one of its strings. It produces sound, but how does it do so? The movement of the string in one direction pushes the molecules of air just in front of it. This produces a crowding of the molecules in that region. When the string moves back from its original position, it leaves behind a space with fewer molecules. Meanwhile, the molecules which were compressed or crowded together transmit some of their energy to the other molecules around them, and then return to their original place, which had become less dense earlier. In other words, the vibratory motion set up by the guitar string causes, alternately in space, a crowding together of the molecules of air (a condensation) and an emptying out of the molecules (a rarefaction).

Taken together, a condensation and a rarefaction make up a sound wave. This kind of wave is called longitudinal, because the vibratory motion is forward and backward along the direction that the wave is following. Because such a wave travels by disturbing the molecules, a medium is absolutely essential for sound waves to travel. Sound waves cannot travel through a vacuum.

How is it that we hear these sound waves? The condensations and rarefactions set our eardrums vibrating strongly enough for our brains to ‘hear’ them. Our ears do not hear all vibrations. Sound is generally audible to the ear if the frequency (number of vibrations per second) of the sound waves lies between 20 and 20000 vibrations per second. The range varies from one individual to another. Sound waves with frequencies below the audible range are called infrasonic (or, loosely, subsonic); those with frequencies above the audible range are called ultrasonic.

For us sound is what we can hear. But for scientists, sound is considered to be the waves of vibratory motion, whether or not they are heard by the human ear.

Now one can ask how fast these vibrations travel. Well, the velocity of sound is not a constant. It is different for different media, and in the same medium it is different at different temperatures. Sound travels more slowly in liquids than in solids, and more slowly still in gases. Since the ability to conduct sound depends on the properties of the medium, such as its density and stiffness, solids are generally better conductors than liquids, and liquids are better conductors than gases. In air at normal room temperature, the speed of sound is usually taken to be 344 meters per second.

Sound waves can be reflected, refracted and absorbed, just as light waves can be. The reflection of a sound wave back to its source, in sufficient strength and with a sufficient time lag to be separately distinguished, is called an echo. If a sound wave returns within 1/10 sec, the human ear is incapable of distinguishing it from the original one.

Let us do a quick calculation to find out how far away the reflecting surface must be for the echo to take longer than 1/10 second. The velocity of sound is 344 m/s at normal room temperature. Can you confirm that the reflecting wall must be more than 17.2 m from the person for an echo to be heard? In this case the sound requires 1/20 sec to reach the reflecting surface and the same time to return.
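The calculation above can be checked with a few lines of Python. This is only a sketch: the function name and constants are made up for illustration, and the speed of sound is the room-temperature value quoted in the article.

```python
SPEED_OF_SOUND_M_PER_S = 344.0   # value quoted in the article (room temperature)
MIN_ECHO_DELAY_S = 0.1           # the ear needs at least 1/10 second


def min_echo_distance(speed=SPEED_OF_SOUND_M_PER_S, delay=MIN_ECHO_DELAY_S):
    """Smallest distance to the wall for which the echo is heard separately.

    The sound travels to the wall and back, so the one-way distance is
    half of what the sound covers during the delay.
    """
    return speed * delay / 2


print(f"{min_echo_distance():.1f} m")  # prints "17.2 m"
```

Half of the 1/10 second is spent going to the wall and half coming back, which is why the distance is 344 × 1/20 m.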

An echo is very useful, especially if you are a bat! Bats navigate by listening for the echo of their high frequency cry. Scientists have designed many instruments using the principle of the echo. For example, sonar and depth sounders work by analyzing the echo time lag of sound waves. Radar sets broadcast waves of a particular frequency, pick up the portion reflected back by objects, and electronically determine the distance and direction of the objects.

When a surface reflects sound, it partially absorbs and partially reflects the energy. As the process is repeated the sound becomes weaker and weaker and eventually ceases. The absorption of sound by objects is also the reason why you don’t hear an echo in a hall full of people, but you do hear one when the hall is empty.

What is it that makes the sound of music pleasant when an expert is playing? If somebody who does not know the instrument plays, why is the sound thought of as ‘noise’? Musical sounds are distinguished from noise in that they are composed of regular, uniform vibrations, as in the case of the expert playing. Noise is nothing but a collection of irregular and disordered vibrations. But nowadays, composers use musical sounds as well as noises!

One musical tone is distinguished from another on the basis of pitch, intensity or loudness, and quality. Pitch describes how high or low a tone is and depends upon the rapidity with which a sounding body vibrates, i.e., upon the frequency of vibration: the higher the frequency, the higher the tone. The intensity or loudness of a sound depends upon the extent to which the sounding body vibrates, i.e., the amplitude of vibration.

A sound is louder when the amplitude of vibration is greater, and the intensity decreases as the distance from the source increases. Loudness is measured in units called decibels. The sound waves given off by different vibrating bodies differ in quality. A note from a saxophone, for example, differs from a note of the same pitch and intensity produced by a guitar.

Atoms and the bomb

The atomic tests that have been carried out at Pokhran in Rajasthan have initiated a lot of discussion among both scientists and the general public. What exactly is an atomic explosion and how does it work? Do you know that the very name itself is misleading? What occurs is actually a nuclear reaction. However, when the first atomic tests were conducted by American scientists more than 50 years ago, it was thought that most people would not understand the meaning of the word nuclear. So they called it an atomic bomb, or, more simply, just an atom bomb. Only at the end of the last century was it established that atoms do exist. Ernest Rutherford in England showed that atoms have a small central core (about 100,000 times smaller than the atom itself) called the nucleus. The nucleus is positively charged and surrounded by negatively charged particles called electrons.

Most of the atom is really empty. The electrons orbit around the nucleus, just as the planets orbit the sun. The nucleus is not a blob, but is made up of protons and neutrons. While the proton is positively charged (equal and opposite to the electron), the neutron has no charge. However, a proton and a neutron are both around 2000 times heavier than an electron. All interactions which cause matter to be formed in large amounts (for example, liquids, solid pieces of metal) are governed by properties of the electrons. The energy due to these electric interactions is not very large: for example, you can make ice or steam just by cooling or heating water in an ordinary kitchen at home. You do not need big laboratories for these processes.

The energies involved in nuclear reactions (involving protons and neutrons) turn out to be millions of times larger than these electronic energies. Almost within two decades of the discovery of the nucleus, it was clear to scientists that atoms had, trapped within their nuclei, enormous sources of energy. The clock was already ticking away towards the first atom bomb.

-Usha Didi

Nuclear binding

To understand this a bit better, however, we must first go into a little more detail about how exactly the protons and neutrons (together called nucleons) are bound together into the nucleus. Let us examine this concept of binding a little more closely.

- The nucleus

Opposite charges do attract, and so it seems possible that the positively charged nucleus does hold together the negatively charged electrons in an atom. But what about the protons themselves? If they are all stuffed together in a tiny space and all carry positive charge, they should repel each other. Even before the atom is formed, then, the nucleus would break up, since the electrical force between the like-charged protons would cause them to fly apart. What, then, prevents the nucleus from breaking up? This question was answered when a new force was identified. Here we cannot give you all the details of these truly exciting discoveries. We will merely sum them up rapidly. It was discovered that, apart from the well-known electrical force and gravitation (which allows you to stay on Earth), there are two more forces that have to do with the stability of nuclei in atoms. The first force is called the strong force. It is responsible for holding the nucleus together.

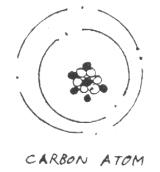

The other force is called the weak force and is responsible for breaking up of nuclei in special kinds of atoms. The weak force is of interest when we talk about some forms of radiation and radioactivity, words which are now familiar through daily use in the field of medicine. It is the strong attractive force between neutrons and protons that overcomes the repulsion due to the proton’s electrical charge and causes the nucleus to remain together. Let us see what that means for a typical atom, say carbon. Carbon has 6 electrons, so it must have 6 protons to balance the electric charge. However if you find the mass of the carbon atom (how would you go about doing such a thing?!), it turns out that it is as heavy as 12 hydrogen atoms, that is, it is as though there are 12 protons in it. The explanation is that there are 6 neutrons in the carbon nucleus with mass very similar to that of protons. The 6 protons in the nucleus provide the positive charge to attract the 6 electrons outside, while the 6 neutrons provide the attractive strong force to prevent the (positively charged) protons inside the nucleus from flying apart due to their mutual repulsive electric force.

Since electrons are very much lighter than protons or neutrons, the mass of an atom is more or less determined by the number of nucleons. If there are A nucleons in an atom, it is said to have a mass A in atomic units. As you get to larger (and heavier) nuclei, it is seen that more and more neutrons are required in order to overcome the enormous repulsion between the large numbers of protons in the nucleus. Hence, there are more neutrons than protons in heavier nuclei. This simple fact will play a major role in understanding the working of an atom bomb or a nuclear reactor.

Consider a bowl of water standing on a table. The sides of the bowl act as a barrier, preventing the water from falling out. If the bowl is accidentally shaken, the water sometimes spills out. Some energy is given to the bowl in the process of shaking. Part of this energy is transferred to the water inside. The more energetic water can then overcome the barrier of the bowl walls and spill out. Once it crosses the barrier, it falls out due to gravity (its own weight).

We can think of the protons and neutrons (forming nuclear matter) as a kind of liquid, like water, confined within a “bowl” which is the nucleus. Just as surface tension holds a drop of water together, the strong nuclear force holds the nucleons (both protons and neutrons) together inside a drop-shaped nucleus. This (very early) model of the nucleus is due to Niels Bohr. Of course, there is no hard edge to the nucleus (analogous to the walls of the bowl). What acts as the wall is an energy barrier formed as a result of the forces between the nucleons. This is called the binding energy.

Because of the energy barrier, the nucleons are confined within the nucleus. To break through this barrier (resulting in the breaking up of the nucleus itself) needs energy, just as shaking provides the energy needed to spill the water from the bowl. The smaller the binding energy, the easier it is to break up the nucleus, that is, the less stable the nucleus is. It turns out that nuclei of different atoms have different degrees of stability. A convenient way of looking at this is to consider the average binding energy B of each nucleon in the nucleus. We see from the figure that B is almost constant for most nuclei, being about 8 MeV. Typical energies involved in chemical reactions are of the order of a few electron-volts (eV); an MeV is a million times larger. Hence the energies involved in nuclear transmutations are millions of times larger. If we look at the binding energy curve more closely, we see that it peaks around A = 60, which corresponds roughly to the iron nucleus.

The B value gradually decreases as A becomes larger than 60. This is precisely the characteristic that is exploited in nuclear fission which leads to both nuclear power generation as well as the atom bomb.

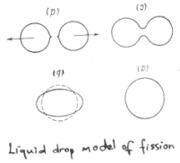

Nuclear fission and fusion

What exactly is meant by fission? The word is borrowed from biology, where fission means the breaking up of a living cell into two roughly equal parts. Imagine we have a nucleus with 200 nucleons and we can somehow break it into two equal parts. This can be thought of as happening in two steps.

1. The nucleus is broken up into 200 individual nucleons.

2. 100 nucleons each are bound together into two nuclei.

The first step involves breaking up a nucleus and needs energy to occur. How much energy does it need? We take a look at our binding energy figure. It tells us that for A = 200, the binding energy per nucleon B is roughly 7 MeV. Hence the total binding energy = B × A = 7 × 200 = 1400 MeV. This is the amount of energy we need to supply for this step to happen.

The second step involves the forming of two nuclei. Each releases energy. Observe that at A = 100, B is roughly 8 MeV. Hence each nucleus releases B × A = 8 × 100 = 800 MeV, so that the two nuclei together release 2 × 800 = 1600 MeV.

So the process, where a nucleus with 200 nucleons breaks up into two nuclei with 100 nucleons each, needs a net amount of energy equal to the difference between the above two steps. That is, the net amount of energy needed equals 1400 - 1600 = -200 MeV. The minus sign means that energy is released in the process! This is the source of nuclear energy.
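The two-step bookkeeping above can be written out as a short Python sketch. The function name and arguments are invented for illustration; the binding energies per nucleon (7 MeV and 8 MeV) are the rough values read off the figure in the text, not precise data.

```python
def fission_energy_released(a_parent, b_parent_mev, b_fragment_mev, n_fragments=2):
    """Net energy (in MeV) released when a nucleus splits into equal fragments.

    Step 1: pull the parent apart into free nucleons (costs A * B_parent).
    Step 2: bind the nucleons into fragments (each gives back A_frag * B_frag).
    """
    energy_to_break = a_parent * b_parent_mev
    a_fragment = a_parent / n_fragments
    energy_from_forming = n_fragments * a_fragment * b_fragment_mev
    return energy_from_forming - energy_to_break


# A = 200 with B about 7 MeV, splitting into two A = 100 nuclei with B about 8 MeV
print(fission_energy_released(200, 7.0, 8.0))  # prints 200.0
```

A positive result means energy comes out. If the fragments were less tightly bound than the parent, the same function would return a negative number, meaning the split would cost energy.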

Let us summarize. We need (a lot of) energy to break up a heavy nucleus. A lot more energy is released when medium-heavy nuclei are formed. Hence, when a heavy nucleus breaks up into two medium-heavy nuclei, energy is released in the process. This is how energy is produced in nuclear fission. This energy powers nuclear reactors like those at Kalpakkam, as well as nuclear explosions, like those at Pokhran and Chagai last year. Looking at the binding energy of lighter nuclei, we can make an equally interesting statement. When two light nuclei (such as hydrogen) fuse together to form a slightly heavier nucleus, again, energy is released.

This is the basis of energy production in fusion reactions. This happens exactly as in the case of fission: at low A values, B increases with A and exactly the same argument as above can be used to show that more energy is released than absorbed. This is how energy is produced in the Sun.

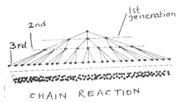

The chain reaction

We have seen that energy is produced when fission of a heavy nucleus takes place. When this energy is produced in controlled amounts, the process results in peaceful energy production in a nuclear reactor. When it is an uncontrolled process, an atom bomb results.

We know that if there are Z electrons in an atom, then there must be Z protons as well. Then there are A-Z neutrons in the nucleus. There must be at least as many neutrons as protons in a nucleus for providing the strong attractive force. Typically there are more and more neutrons for heavier atoms to account for the increasing repulsion between protons.

Hence, a big nucleus never just breaks up into two equal fragments. Since the lighter nuclei have a smaller percentage of neutrons, a few neutrons are simply freely released as well. These extra neutrons are crucial for continuing the fission process.

The most common element used in nuclear fission is Uranium, in the form which has 235 nucleons in it. We shall denote this by 235U. It is called radioactive, meaning that it decays (and is not very stable).

Left to itself, nothing much would happen, just like the bowl of water on the table.

The analogue of shaking the bowl is to hit the Uranium with a neutron. What happens is that the Uranium absorbs the neutron. As the neutron becomes part of the nucleus, it loses its binding energy (of about 7 MeV). The Uranium nucleus acquires this energy. It is said to be in an excited state, with more energy than usual. This energy is enough for it to overcome the fission energy barrier.

The Uranium breaks up into two roughly equal fragments and a few neutrons. In doing so, it releases energy, as we have already discussed. More importantly, it also releases neutrons. These neutrons then hit other neighboring Uranium nuclei. These nuclei fragment, releasing more energy and more free neutrons. These neutrons get absorbed by more Uranium nuclei, leading to a cascading effect. Hence energy production occurs by what is called a chain reaction.

A simplified understanding is obtained by considering the nucleus as a liquid droplet. When hit by a neutron, the drop shape is disturbed. The energy released by the absorbed neutron causes the drop to vibrate and stretch. The deformed nucleus is unstable, and ultimately breaks and reforms into two spherical (stable) droplets. Some neutrons are lost and the two fragments are driven apart with considerable force due to the repulsion between their protons.

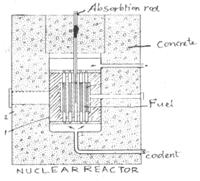

Producing power from fission

It is clear that, the faster the reactions take place, the faster will be the rate of energy production. If only a small number of interactions are allowed, what results is a controlled energy supply. This is what happens in a nuclear power reactor. Of course, the detailed engineering is extremely complex, but the principle is rather simple. Uranium is used as it occurs in its natural state. This consists of 99.3% 238U and 0.7% 235U. Only 235U undergoes thermal fission when it absorbs a slowly moving neutron. (238U can only fission when hit by fast neutrons). It is more efficient to have slow neutron fission; hence the neutrons that are produced are slowed down by moderators. Typical moderators are graphite rods, which alternate with Uranium rods in a reactor.

The process is started by one random accidental fission occurring. The neutrons coming out of one fission process pass through the graphite and get slowed down. These are absorbed by other 235U nuclei and the process continues. If too many neutrons are produced, the reaction occurs too quickly, and too much heat is produced; if too few neutrons are produced, the reaction will die out. The arrangement is made so that, on average, just about one neutron from each fission goes on to cause another fission, so that energy is slowly and steadily produced in the form of heat. This heat is taken away (usually by water in tubes surrounding the radioactive core region) through a heat exchanger and used to drive a turbine, which produces electricity from heat.
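The balance described above can be illustrated with a toy model. Suppose each fission leads, on average, to `k` further fissions in the next generation. The name `k` and the sample values below are assumptions made for this sketch; a real reactor is far more complicated.

```python
def neutron_population(k, generations, start=1.0):
    """Number of fissions in each generation of a toy chain reaction.

    k is the assumed average number of new fissions caused by the
    neutrons from one fission: k = 1 is steady, k < 1 dies out,
    k > 1 grows without limit.
    """
    counts = [start]
    for _ in range(generations):
        counts.append(counts[-1] * k)
    return counts


for k in (0.5, 1.0, 2.0):  # illustrative values only
    print(k, neutron_population(k, 10)[-1])
```

With `k` exactly 1 the count stays where it started, which is the steady state a power reactor aims for. Even a small excess above 1 grows very quickly, because the growth is repeated multiplication.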

Nuclear explosives are so designed that the chain reaction is not controlled. For safety, the fuel is kept in lumps small enough that neutrons produced by random fissions are lost and not reabsorbed by other nuclei. For maximum efficiency, very pure 235U is used. Sometimes another radioactive nucleus called Plutonium (239Pu) is used as well.

A chemical explosive surrounds the nuclear fuel. When it is ignited, it explodes and drives the nuclear fuel inwards, together. As the lumps of fuel are brought together, a critical mass is reached. A chain reaction is then set up. Instead of restricting the number of neutrons produced per fission, the rate is allowed to increase uncontrolled. At a point when about a hundred billion billion neutrons have been produced, the process reaches criticality and the fuel itself explodes.

Typically this happens about half a microsecond after the initial chemical explosive is ignited. Within 1/50 of a microsecond of achieving criticality, the entire fissile material breaks up, exploding into a massive fireball of energy and throwing the nuclear fragments apart. Since the fuel is dispersed, the chain reaction stops at once. Hence all the energy release in an atom bomb occurs in a split second of time. But the damage caused lasts for a long, long time. That is another story.

Destination Mars

K. Narayan Kumar

SPIC Mathematical Institute, Chennai

If you look up at the night sky you see a red planet shining majestically. That is Mars, Mangala or Sevvai. It has been in the news recently. A spacecraft sent by the USA landed on Mars in early July, and photographs sent back by the craft have been appearing in newspapers and television news bulletins. Mars has fascinated man for thousands of years, ever since it was first identified. With the invention of the telescope, we were able to make detailed observations of the surface of Mars.

- The canals of Mars

About a hundred years ago, the Italian astronomer Giovanni Schiaparelli noticed several lines crisscrossing the surface of Mars. He called them canali or channels.

Mars and Earth

In English, canali was often incorrectly translated as canals. This led many people to speculate that the lines were artificial waterways, built by intelligent Martians. It was in this setting that H. G. Wells wrote his famous story The War of the Worlds about the invasion of Earth by Martians.

A strong supporter of the intelligent Martian theory was the American astronomer Percival Lowell, who is famous for having predicted the existence of a ninth planet outside the orbit of Neptune. In the 1890s, Lowell claimed that he could see hundreds of such “canals” and that new canals were created regularly.

Strangely, most other astronomers of that time could not see such canals, even when they used Lowell’s own telescopes! As the century progressed, the quality of telescopes improved and more detailed information about the surface of Mars and its atmosphere became available. It was observed that Mars has high volcanic mountains and large deep valleys.

In the 1960s and 70s, a number of spacecraft were sent towards Mars. Some of them merely flew past Mars sending back pictures, while others (named Viking) landed on Mars and sent back details about its surface and atmosphere. The data obtained from these missions showed that Lowell was way off the mark. There is no liquid water on the Martian surface or in its atmosphere. There are neither Martians nor canals.

Yet, a number of facts make the study of Mars and its history interesting. Unlike other planets, Mars resembles the earth in many ways. It has an atmosphere and its temperature is not too “extreme”, varying from -128 °C at the poles at night to 17 °C at the equator in the day. A Martian day is almost exactly as long as our day. Like the earth, Mars has seasons because its axis of rotation is tilted (see Satyameva Jayate Quiz, page 24).

Is there life on Mars

Moreover, Mars does have water, though all of it is either buried under the ground or frozen in polar icecaps. Water that flowed in the distant past has left behind deep valleys, exposing rocks and materials that were formed millions of years ago. This makes Mars an ideal place to study the origin of the solar system.

Today, the Martian atmosphere is very thin. At one time, it was as dense as our atmosphere. Understanding how the atmosphere of Mars reached its current state may give us valuable clues about the future of our planet.

Around the time the first living organisms evolved on earth, Mars was also a warm and wet place. Did life evolve on Mars too?

Mission to Mars

Surprisingly, some evidence of life on Mars was found in Antarctica! While examining a meteorite on the icy continent, the American scientist David McKay and his colleagues found substances related to early forms of life (Science News, JM, Nov- Dec 1996). The composition of the meteorite indicated that it came from Mars.

Some scientists have this theory. Life did evolve on Mars and some of it got fossilised in rocks. Millions of years ago, a large meteorite crashed on Mars. The resulting explosion threw out a small rock containing this organic fossil. This rock floated through space and fell on the earth as a meteorite. If this is true, it is also possible that life first evolved on Mars or some other planet and arrived on earth riding on a meteorite!

The current mission to Mars, named Pathfinder, is one among a number of spacecraft to be sent to Mars by the American National Aeronautics and Space Administration (NASA) to gather information that can help us verify or disprove such theories.

The Pathfinder spacecraft was launched in December, 1996 and reached Mars in July, 1997. After entering the atmosphere of Mars, a landing craft was launched which landed safely at a predetermined spot.

The difference between this craft and the earlier Viking missions is the presence of a mobile robot called Sojourner. Sojourner is a small mobile platform, about a foot tall, with six wheels. It is powered by solar energy during the day time and by battery in the night. It moves at a rather slow speed of about 1 cm per second. It carries cameras and other sensors and moves around the landing craft collecting data.

Scientists on earth control the movements of Sojourner based on the pictures that its cameras send back. The images are used to direct the robot to interesting parts of the terrain and to avoid obstacles. The robot is “intelligent” in the sense that it can decide whether to avoid an obstacle or climb over it without directions from earth. Further, when errors happen it is programmed to take some “reflex” actions.

The Pathfinder mission has sent back several pictures of the landscape of Mars around the landing site (see JM, Jul-Aug 1997). It has also gathered useful information about the composition of its rocks and atmosphere. It will take years to carefully analyze this data and see whether it confirms or contradicts our speculations about the past and future of both Mars and the earth.

The next spacecraft sent by NASA, called the Mars Global Surveyor, has already reached Mars. Unlike Pathfinder, this craft will not land on Mars. Instead, it will orbit Mars about 250 km above the surface, sending back data about the surface and atmosphere of Mars. It will be in a polar orbit, that is, its orbit will pass over the two poles. This will allow it to fly over different parts of the surface as it circles the planet. (What would happen if it were placed in an equatorial orbit?) The photographs sent by the Mars Global Surveyor will help in choosing landing sites for future missions. NASA expects that by 2025, man will have landed on Mars!

D D T (dichloro-diphenyl-trichloroethane)

The insecticide dichloro-diphenyl-trichloroethane (GESAROL), widely known as DDT, ant powder etc., is a synthetic insecticide of very high toxicity. Until recently, it was used world wide on a large scale. It was considered as a public health miracle because of its success in the prevention of malaria and other diseases spread by insects. DDT is prepared by the condensation of chlorobenzene and chloral hydrate in presence of sulfuric acid.

First synthesized by a German graduate student in 1873, it was rediscovered by Dr. Paul Mueller, a Swiss chemist and entomologist, in 1939. He won a Nobel Prize in 1948 for the rediscovery of DDT. DDT is a very stable compound. It is stable against acids but it becomes dehydrochlorinated in alkaline conditions. Decomposition of DDT in air or ultraviolet light is rare. Unfortunately, today it is this stability of DDT that is one of the biggest threats to our environment. It contaminates fertile soil and also seeps through the upper crust of the earth to the water table and contaminates the ground water. Grazing animals take in this DDT from the grass and water. It is then transferred to humans through meat and milk. DDT accumulates in the subcutaneous fat of the human body and disrupts the delicate balance of sodium and potassium within neurons.

As a result of this, human beings suffer from delayed nerve conduction, immune system disorders, reproductive organ malfunction, etc. Since DDT collects at high levels in fatty tissues, it can be transmitted, along with vital nutrients, into breast milk. Breast-fed babies in India and Zimbabwe absorb DDE (a highly persistent breakdown product of DDT) at six times the acceptable daily intake.

Biological effects of DDT

Even small amounts of DDT can affect microorganisms, especially those that live in water (e.g. algae and plankton), because the aquatic environment can bring more DDT in contact with these organisms. As an example of this high sensitivity, water that contains only 0.1 µg of DDT per liter can slow down growth and photosynthesis in green algae. To get an idea of how dilute this water is, think about a paper clip, which weighs about 1 g. Take that mass and divide it by ten million. Now let’s say that this tiny amount of DDT was dissolved in a quarter gallon (about a liter) of water.

Remarkably, microorganism growth will be affected because of this! So, exactly how much DDT can our body tolerate before we should really start worrying? That depends on how much you weigh. At doses above 236 mg of DDT per kg of body weight, you’ll die. Doses of 6-10 mg/kg lead to symptoms such as headache, nausea, vomiting, confusion, and tremors. For fun, try to calculate how much DDT would be lethal for you.
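The arithmetic in this section can be sketched in Python. The function names are invented for illustration, the gallon is assumed to be a US gallon (about 3.785 liters), and the 50 kg body weight is just a sample value; the per-kilogram figures are the ones quoted in the text.

```python
PAPER_CLIP_G = 1.0                 # mass of a paper clip, from the text
DILUTION_FACTOR = 10_000_000       # "divide it by ten million"
QUARTER_US_GALLON_L = 3.785 / 4    # assumption: US gallon, about 0.95 liter


def diluted_mass_micrograms(mass_g=PAPER_CLIP_G, factor=DILUTION_FACTOR):
    """Mass left after dividing by the dilution factor, in micrograms."""
    return mass_g / factor * 1_000_000


def concentration_ug_per_liter(mass_ug, volume_l=QUARTER_US_GALLON_L):
    """Concentration when mass_ug micrograms are dissolved in volume_l liters."""
    return mass_ug / volume_l


def threshold_dose_mg(body_weight_kg, mg_per_kg):
    """Total dose (mg) at which a per-kilogram threshold is reached."""
    return body_weight_kg * mg_per_kg


tiny_mass = diluted_mass_micrograms()
print(tiny_mass, concentration_ug_per_liter(tiny_mass))

sample_weight_kg = 50  # sample value; use your own weight
print(threshold_dose_mg(sample_weight_kg, 6), threshold_dose_mg(sample_weight_kg, 236))
```

One gram divided by ten million is a tenth of a microgram, so the paper-clip picture corresponds to roughly 0.1 µg per liter. For the sample 50 kg person, symptoms would begin at about 300 mg and the fatal threshold quoted above works out to 11800 mg, or about 11.8 g.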

DDT is banned in most countries today. You must be wondering why, then, it is so freely available and widely used in our country. India and China are among the few countries where it is produced and used even today. Though it is meant to be used against mosquitoes that transmit malaria and yellow fever, and against some other insects that spread diseases, it is used indiscriminately everywhere: inside houses, in food shops, on human and animal bodies, etc. The biggest threat is from its use in agriculture, where it is used against a wide spectrum of insects, both useful and harmful. Also, birds that feed on these insects accumulate a large amount of it in their bodies by feeding on insecticide-sprayed land.

Even though DDT once proved to be extremely effective against flies and mosquitoes, its nondegradability and toxicity force us to look for alternatives now. Also, a large number of insects have developed resistance to this chemical over a period of time. Research to develop less harmful biopesticides, biological control of pests, etc. to substitute for DDT has been under way for more than a decade, but is not yet fully successful. Today, limited use of DDT, farming without chemicals (organic farming), etc. are welcome alternatives. It is yet another example of a human invention which started off as the greatest triumph of science over nature and ended up as an environmental disaster. Next time you see someone using DDT without care, tell them about its harmful effects. Also, make sure that vegetables and fruits are washed well before they are used.

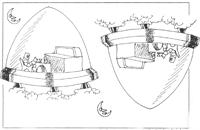

Artificial Gravity

- K.S. Balaji, The other school, Chennai

Imagine that at some distant point in the future we decide to colonise a planet belonging to a star. The nearest star is some four light years away, so a one-way trip will take considerably more than four years. A more distant star will mean that several generations of astronauts will be born and grow up in the spaceship. Humans are not designed to live for long periods in zero gravity. We would like to create conditions as close to those on earth as possible, including gravity. This is achieved by having the ship accelerate at one ‘g’. With this acceleration, the front of the ship will be ‘up’ and the back ‘down’. All objects will fall (as seen from inside the ship) to the ‘ground’ if dropped, with an acceleration of one g, exactly as on earth. You could even have an artificial lake, which would stay stuck to the ground and not float up (you could go boating on it).

Halfway through the trip, when you are traveling at a speed very close to that of light, you would do well to turn the spaceship around so that the boosters are firing in the direction in which you are traveling. This way, you slow down so that when you reach your star, you don’t shoot by at (almost) the speed of light. Furthermore, now that the direction of your acceleration has reversed, so has the direction of gravity in the ship. But your ship has also turned around, so your ‘ground’ stays ‘ground’ and your ‘sky’ stays ‘sky’.
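To get a feel for such a trip, here is a rough sketch in Python. It uses the standard special-relativity formulas for a rocket with constant acceleration, assumes the ship speeds up at one g for the first half of the distance and slows down at one g for the second half, and ignores fuel and every other practical problem. The function name and the constants are chosen for illustration only.

```python
import math

C = 299_792_458.0          # speed of light, m/s
G = 9.81                   # one 'g', m/s^2
YEAR = 365.25 * 24 * 3600  # seconds in a year
LIGHT_YEAR = C * YEAR      # metres in a light year


def one_g_trip(distance_ly, accel=G):
    """Return (ship_years, earth_years, peak_speed_as_fraction_of_c).

    Assumes acceleration over the first half of the distance and an equal
    deceleration over the second half (the turn-around described above).
    """
    half_distance = 0.5 * distance_ly * LIGHT_YEAR
    # Lorentz factor reached at the midpoint of the journey.
    gamma_peak = 1.0 + accel * half_distance / C**2
    ship_time = 2.0 * (C / accel) * math.acosh(gamma_peak)
    earth_time = 2.0 * (C / accel) * math.sqrt(gamma_peak**2 - 1.0)
    peak_speed = math.sqrt(1.0 - 1.0 / gamma_peak**2)
    return ship_time / YEAR, earth_time / YEAR, peak_speed


if __name__ == "__main__":
    ship, earth, speed = one_g_trip(4.0)
    print(f"Ship clock: {ship:.1f} years, Earth clock: {earth:.1f} years")
    print(f"Peak speed: {speed:.3f} of the speed of light")
```

Under these assumptions, a four light year trip takes about 5.6 years as measured on Earth, but only about 3.5 years by the clocks on the ship. Try a more distant star and see how the two clocks drift further apart.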

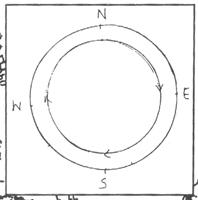

Suppose you are not traveling to a star but are planning on spending time in a space station that is hovering somewhere in the solar system. Can we create gravity in a ship that is not going anywhere? Yes! We will need to build a rotating space station, perhaps like the one shown in the drawing.

It is shaped like a torus (a tube like the one inside a car tire) which rotates around a central axis. We know that a point in uniform circular motion is accelerating toward the center of the circle. The outer rim will act as the ‘floor’ for the people inside the torus: they will be pressed against it. Like the bottom of the accelerating spaceship we described a little while ago, the outer rim is accelerating toward the central axis. To the people inside the big tube, it will be exactly as though there is a gravitational field, only pointing outward from the center. (According to Einstein, it is a gravitational field.)
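How fast must the station spin? For uniform circular motion the acceleration toward the center is ω²r, where ω is the angular speed and r the radius. Setting this equal to one g gives the rotation period. Here is a small Python sketch; the 100 metre radius is just an example, not a figure from the article.

```python
import math

G = 9.81  # one 'g', m/s^2


def spin_for_gravity(radius_m, accel=G):
    """Return (period_seconds, rim_speed_m_per_s) so that omega^2 * r == accel."""
    omega = math.sqrt(accel / radius_m)  # angular speed in radians per second
    period = 2.0 * math.pi / omega       # time for one full rotation
    rim_speed = omega * radius_m         # speed of the 'floor'
    return period, rim_speed


if __name__ == "__main__":
    period, rim_speed = spin_for_gravity(100.0)  # hypothetical 100 m radius
    print(f"One rotation every {period:.1f} s, floor moving at {rim_speed:.1f} m/s")
```

A station of 100 m radius would have to turn once about every 20 seconds. A larger station can turn more slowly for the same ‘gravity’.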

It is instructive to see this from two perspectives: if a person inside the space station were to drop an object, what would happen? Seen from outside, we will say that the object has an initial tangential velocity, hence it will go in a straight line, hitting the ‘floor’ of the space station, which is rotating around. Inside the station, the person will simply see the object drop at her feet, and ascribe it to gravity.

Question: will the dropped object land at a point exactly below it? Write to us.
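If you would like to check your answer, here is a sketch in Python that follows the ‘seen from outside’ picture. It assumes there is no air resistance and that the object is released at rest relative to the person, so it moves in a straight line at the tangential speed it had at the moment of release. The function name and the example numbers are made up for illustration.

```python
import math


def landing_offset(radius_m, drop_height_m):
    """Distance along the floor between the spot directly 'below' the object
    at release and the spot where it actually lands.

    A positive value means the object lands behind that spot, that is,
    on the side opposite to the direction of rotation.
    """
    r0 = radius_m - drop_height_m  # distance of the object from the axis
    # Angle, seen from the axis, covered by the object's straight-line path.
    theta_object = math.acos(r0 / radius_m)
    # Angle through which the floor turns in the same time (omega * t).
    theta_floor = math.tan(theta_object)
    return radius_m * (theta_floor - theta_object)


if __name__ == "__main__":
    offset = landing_offset(100.0, 1.5)  # hypothetical station and drop height
    print(f"The object lands about {offset * 100:.0f} cm from the point below it")
```

Notice that in this simple picture the rate of rotation drops out: the answer depends only on the size of the station and the height of the drop.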

Read the novel ‘Rendezvous with Rama’ by Arthur C. Clarke and see the movie ‘2001: A Space Odyssey’.

- Where Does The Sun Rise?

All of us know that the sun rises in the east and sets in the west! Actually, because the axis of rotation of the earth is not perpendicular to the line joining the sun and the earth, the exact position of sunrise and sunset depends on the latitude of the place (whether you are further up north, as in New Delhi, or in the south, as in Chennai). It also depends on the time of the year (summer or winter, for example).

Here is the curve that the sun sweeps in the sky in New Delhi (latitude = 28˚ North). In summer, the sun rises in the northeast and sets in the northwest (sunrise and sunset times given are approximate). In winter, the sun is always in the south. (The sun is always in the south at noon at such northern latitudes, greater than 23½˚.)
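The direction of sunrise can be estimated with a standard formula from spherical astronomy: cos A = sin δ / cos φ, where A is the direction of sunrise measured from north toward east, φ is the latitude and δ is the sun's declination (about +23½˚ at midsummer and -23½˚ at midwinter in the north). The Python sketch below ignores the bending of light by the atmosphere and the size of the sun's disc, so treat the results as approximate.

```python
import math

TILT_DEG = 23.44  # sun's declination at the June solstice, in degrees


def sunrise_azimuth_deg(latitude_deg, declination_deg):
    """Direction of sunrise in degrees from north toward east.

    90 means due east; less than 90 is north of east, more is south of east.
    Returns None if the sun does not rise or set on that day.
    """
    lat = math.radians(latitude_deg)
    dec = math.radians(declination_deg)
    x = math.sin(dec) / math.cos(lat)
    if abs(x) > 1.0:
        return None  # polar day or polar night
    return math.degrees(math.acos(x))


if __name__ == "__main__":
    for place, lat in [("Chennai", 13.0), ("New Delhi", 28.0), ("London", 51.0)]:
        summer = sunrise_azimuth_deg(lat, TILT_DEG)
        winter = sunrise_azimuth_deg(lat, -TILT_DEG)
        print(f"{place}: summer sunrise at {summer:.0f} deg, winter at {winter:.0f} deg")
```

Sunset is the mirror image: the same angle measured from north toward west.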

In fact, as you go farther north from the equator, the movement of the sun in the sky becomes very noticeable, especially in summer. For example, see what a long path the sun traces in London in summer (latitude = 51˚ North). That’s why summer days there last so long and winter days are short. Contrast this with Chennai (latitude = 13˚ North). The length of the day does not vary much through the year.

In short, as you go away from the equator, in summer, the sun rises farther and farther north. Finally, at the North Pole, the sun never sets! You can think of this as the sun ‘rising’ in the north, circling to the south at midday and ‘setting’ again in the north! Here, the ‘day’ lasts 6 months (followed by 6 months of ‘night’).
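The length of the day can be estimated in the same spirit. A standard formula gives the sun's ‘hour angle’ H at sunrise and sunset from cos H = -tan φ × tan δ, where φ is the latitude and δ the sun's declination; the day then lasts 2H/15 hours, because the earth turns 15˚ every hour. The sketch below, in Python, again ignores the atmosphere and the size of the sun's disc, so the numbers are approximate.

```python
import math

TILT_DEG = 23.44  # sun's declination at the June solstice, in degrees


def day_length_hours(latitude_deg, declination_deg):
    """Approximate hours of daylight for a latitude and solar declination."""
    lat = math.radians(latitude_deg)
    dec = math.radians(declination_deg)
    x = -math.tan(lat) * math.tan(dec)
    if x <= -1.0:
        return 24.0  # the sun never sets
    if x >= 1.0:
        return 0.0   # the sun never rises
    hour_angle_deg = math.degrees(math.acos(x))
    return 2.0 * hour_angle_deg / 15.0


if __name__ == "__main__":
    for place, lat in [("Chennai", 13.0), ("New Delhi", 28.0), ("London", 51.0)]:
        summer = day_length_hours(lat, TILT_DEG)
        winter = day_length_hours(lat, -TILT_DEG)
        print(f"{place}: {summer:.1f} h in midsummer, {winter:.1f} h in midwinter")
```

With these assumptions, the midsummer day comes out a little under 13 hours in Chennai and a little over 16 hours in London.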

Illusions

- G.S. Ranganath, Raman Research Institute, Bangalore

Basically, the eye is like a camera with an image-forming lens in front of a screen. This “analysis” of eye vision is enough to appreciate certain perplexing optical illusions.

The “screen” of the eye is the retina. Most striking is its shape- it is nearly spherical. When focused, an extended object forms a curved image. The retina is curved to accommodate this distortion of the image.

On this curved surface are photoreceptor cells. Light from the crystalline lens has to go through a tangle of optical nerves before reaching the photoreceptors.

There are two types of photoreceptors, the rods and the cones, so named because of their external appearances. They are not evenly distributed throughout the retina. Rods are nearly absent at the center and increase in density as one goes to the periphery. On the other hand, cones are concentrated near the center and are almost absent at the periphery. In other words, we have a cone-rich centre surrounded by a rod-rich periphery.

The rod cells respond only at low levels of light, while the cones are active only at high levels of illumination. So night vision is mostly through the rods, while day vision is confined to the cones. The rods and the cones also do not respond the same way to colours. The rod cells hardly “see” any colour, except for a weak response in the blue. On the other hand, the cones respond to colours. In fact, one can picture them as belonging to three different types, responding in the blue, green and red regions of the visible spectrum. The difference in rod and cone response, together with their distribution on the retina, results in a few interesting illusions.

A photoreceptor remains “dead” for some time after receiving a signal. In other words it is “fatigued”. This mechanism can result in intensity and colour being assessed wrongly. This fatigue leads to a problem which we all know very well: a room looks darker if we enter it from the sunshine. The retina is fatigued by the bright light and is unable to respond to the light that is present in the room. After some time the photoreceptors regain their original state and are “ready” to receive light.

Fatigue can also result in what is termed as an after image. If one sees a bright object for some time and then shifts the gaze to a white wall, then against a white background a “dark” image of the object is seen. The portion of the retina that had received the image is now “sort of dead” and inactive while the rest of the retina is fully operative. This is generally called the negative after image in view of its complementary nature.

But there are also coloured after images. If one stares at a red object against a white background for some time and then removes the object, a greenish image of the object is seen in the same place. Of the three types of cones in the region of the image, the red-sensing cones get fatigued and are thus inactive. Then that particular region can see only a complementary hue: bluish-green. Can you guess now why doctors and nurses wear bluish-green clothes in operation theatres? A rotary disc with a notch (Figure 1) brings out this effect beautifully. With a green-coloured lamp behind the notch, the lamp appears green when the disc rotates one way, but reddish when it turns in the other direction. Which sense of rotation will give you a green colour? Why? The interesting aspect of coloured after images is that the three different types of cones do not have the same amount of “fatigue”. There is an interesting toy which exploits this illusion. Its beauty is so impressive that even the Nobel Prize winner Richard Feynman discusses it in the famous Feynman Lectures on Physics.

A pattern of the type shown in Figure 2 is fixed to a rotating disc. When the disc is rotated, one of the darker rings appears coloured with one colour and the other with another. The colour depends on the speed of rotation, on the brightness of the illumination and often on who sees it. This can be understood as a complicated manifestation of the different cones relaxing at different rates after having been exposed to white light. Many variations of this have been designed. Enhanced colour dynamics can be experienced when the disc is seen under a fluorescent lamp. You can very easily make these discs yourself. Do write to Jantar Mantar about your experience with these rotating discs.

E = mc2

Albert EinsteinWritten for a popular science magazine,

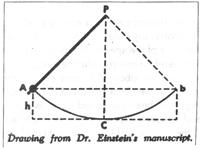

Science illustrated, April 1946

In order to understand the law of the equivalence of mass and energy, we must go back to two conservation or “balance” principles which, independent of each other, held a high place in pre-relativity physics. These were the principle of the conservation of energy and the principle of the conservation of mass. The first of these, advanced by Leibniz as long ago as the 17th century, was developed in the 19th century essentially as a corollary of a principle of mechanics. Consider, for example, a pendulum whose mass swings back and forth between the points A and B. At these points the mass is higher by the amount h than it is at C, the lowest point of the path (see drawing). At C, on the other hand, the lifting height has disappeared and instead of it the mass has a velocity v. It is as though the lifting height could be converted entirely into velocity, and vice versa.

The exact relation would be expressed as mgh = ½mv², with g representing the acceleration of gravity. What is interesting here is that this relation is independent of both the length of the pendulum and the form of the path through which the mass moves. The significance is that something remains constant throughout the process, and that something is energy. At A and at B it is an energy of position, or “potential” energy; at C it is an energy of motion, or “kinetic” energy. If this concept is correct, then the sum mgh + ½mv² must have the same value for any position of the pendulum, if h is understood to represent the height above C, and v the velocity at that point in the pendulum’s path. And such is found to be actually the case. The generalization of this principle gives us the law of the conservation of mechanical energy. But what happens when friction stops the pendulum? The answer to that was found in the study of heat phenomena.
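As a small numerical illustration of this balance, the Python sketch below takes a pendulum bob released from rest at a height h above the lowest point C, ignores friction, and checks that the sum of potential and kinetic energy is the same at the top of the swing and at C. The mass and height are arbitrary example values, and the function names are made up for illustration.

```python
import math

G = 9.81  # acceleration of gravity, m/s^2


def speed_at_bottom(height_m, g=G):
    """Speed at the lowest point C, from m*g*h = (1/2)*m*v^2."""
    return math.sqrt(2.0 * g * height_m)


def total_energy(mass_kg, height_m, speed_m_s, g=G):
    """Potential plus kinetic energy, m*g*h + (1/2)*m*v^2, in joules."""
    return mass_kg * g * height_m + 0.5 * mass_kg * speed_m_s**2


if __name__ == "__main__":
    mass, height = 2.0, 0.2  # hypothetical bob: 2 kg, raised by 20 cm
    v = speed_at_bottom(height)
    print(f"Speed at C: {v:.2f} m/s")
    print(f"Energy at A: {total_energy(mass, height, 0.0):.3f} J")
    print(f"Energy at C: {total_energy(mass, 0.0, v):.3f} J")
```

Note that the speed at C does not involve the mass or the length of the pendulum, only the height h.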

This study, based on the assumption that heat is an indestructible substance which flows from a warmer to a colder object, seemed to give us a principle of the “conservation of heat”. On the other hand, from time immemorial it has been known that heat could be produced by friction, as in the fire-making drills of the American Indians. The physicists were for long unable to account for this kind of heat “production”. Their difficulties were overcome only when it was successfully established that, for any given amount of heat produced by friction, an exactly proportional amount of energy had to be expended. Thus did we arrive at a principle of the “equivalence of work and heat”. With our pendulum, for example, mechanical energy is gradually converted by friction into heat.

In such fashion the principles of the conservation of mechanical and thermal energies were merged into one. The physicists were thereupon persuaded that the conservation principle could be further extended to take in chemical and electromagnetic processes, in short, could be applied to all fields. It appeared that in our physical system there was a sum total of energies that remained constant through all changes that might occur.

Now for the principle of conservation of mass. Mass is defined as the resistance that a body opposes to its acceleration (inert mass). It is also measured by the weight of the body (heavy mass). That these two radically different definitions lead to the same value for the mass of a body is, in itself, an astonishing fact.

According to the principle – namely, that masses remain unchanged under any physical or chemical changes – the mass appeared to be the essential (because unvarying) quality of matter. Heating, melting, vaporization, or combining into chemical compounds would not change the total mass. Physicists accepted this principle up to a few decades ago. But it proved inadequate in the face of the special theory of relativity. It was therefore merged with the energy principle, just as, about 60 years before, the principle of the conservation of mechanical energy had been combined with the principle of the conservation of heat.

We might say that the principle of the conservation of energy, having previously swallowed up that of the conservation of heat, now proceeded to swallow that of the conservation of mass, and holds the field alone. It is customary to express the equivalence of mass and energy (though somewhat inexactly) by the formula E = mc², in which c represents the velocity of light, about 300,000 kilometres per second. E is the energy that is contained in a stationary body; m is its mass. The energy that belongs to the mass m is equal to this mass, multiplied by the square of the enormous speed of light, which is to say, a vast amount of energy for every unit of mass. But if every gram of material contains this tremendous energy, why did it so long go unnoticed? The answer is simple enough: so long as none of the energy is given off externally, it cannot be observed. It is as though a man who is fabulously rich should never spend or give away a cent; no one could tell how rich he was.
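To see how vast this energy is, here is a short Python calculation of E = mc² for a single gram of material, using the rounded value of c quoted above. The conversion to kilowatt-hours is added only to give a familiar unit; the function name is made up for illustration.

```python
C = 3.0e8  # speed of light in m/s (the rounded 300,000 km/s quoted above)


def rest_energy_joules(mass_kg):
    """Energy contained in a stationary body of the given mass: E = m * c^2."""
    return mass_kg * C**2


if __name__ == "__main__":
    energy = rest_energy_joules(0.001)  # one gram, expressed in kilograms
    kwh = energy / 3.6e6                # 1 kilowatt-hour = 3.6 million joules
    print(f"One gram corresponds to about {energy:.1e} J, or {kwh:.1e} kWh")
```

One gram comes to roughly 9 × 10¹³ joules, about 25 million kilowatt-hours.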

Now we can reverse the relation and say that an increase of E in the amount of energy must be accompanied by an increase of E/c² in the mass. I can easily supply energy to the mass; for instance, I can heat it by 10 degrees. So why not measure the mass increase, or weight increase, connected with this change? The trouble here is that in the mass increase the enormous factor c² occurs in the denominator of the fraction.
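A rough calculation shows how small this increase is. The Python sketch below assumes, purely for illustration, that the body is one kilogram of water (specific heat about 4186 joules per kilogram per degree) heated by 10 degrees; neither the material nor the amount is specified in the article.

```python
C = 3.0e8                     # speed of light in m/s (rounded)
SPECIFIC_HEAT_WATER = 4186.0  # J per kg per degree; assumed example material


def mass_increase_kg(mass_kg, temperature_rise, specific_heat=SPECIFIC_HEAT_WATER):
    """Mass gained when a body is heated: (energy supplied) / c^2."""
    energy_supplied = mass_kg * specific_heat * temperature_rise  # joules
    return energy_supplied / C**2


if __name__ == "__main__":
    gain = mass_increase_kg(1.0, 10.0)  # 1 kg of water heated by 10 degrees
    print(f"Mass increase: about {gain:.1e} kg")
```

The increase comes out to less than a millionth of a millionth of a kilogram.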